
Notes from AcqFest (2007/07)

Proposed agenda

Day 1 - FROM THE MOUSE'S LAIR

- OFBiz training video(s) -> how it works: http://docs.ofbiz.org/display/OFBTECH/Framework+Introduction+Videos+and+Diagrams

- calendar -> goals & deadlines (essential for art!)

- customizing -> purchasing & invoicing

- workflow for acq discussion

Day 2 - INTO THE RAT'S NEST

- serials -> module from Hell

- ERM -> how/if it should fit

Day 3 - AND ON INTO THE LIGHT

- more on serials (?)

- internationalization (i18n)

- ideas on authorities (?)

Serials

Despite the suggested order of discussion, we jumped rapidly into the rat's nest of serials. Call us masochists.

Idea: scan UPCs to simplify the receiving workflow ;)

The OFBiz Manufacturing module is a good model for interacting with a scheduler. Talk to a calendar server.

SerialsThing

Maybe Evergreen serials acquisitions could use a SerialsThing algorithm? Traditional serials acquisitions systems have been built around a calendar-like model that drives a report of the issues that should have arrived and of when a particular issue should be claimed. Instead, a collaborative model would allow libraries to reuse patterns and claim periods / policies for given serials, find out how many other libraries have already received a given issue, and trigger claiming accordingly…

Useful shared serials properties:

- Title

- Publisher

- Publishing pattern

- Publisher claiming policies

- Subscription rates through various vendors / for various library sizes
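A minimal sketch of the collaborative claiming idea. Everything here is an assumption for illustration: `SharedSerial`, its fields, and the rule "suggest a claim once at least `threshold` other libraries have checked the issue in" are hypothetical and not part of Evergreen or any existing SerialsThing service.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Set


@dataclass
class SharedSerial:
    """Hypothetical community-maintained record for one serial title."""

    issn: str
    title: str
    publisher: str
    pattern: str                                # publishing pattern, free text here
    publisher_claim_days: Optional[int] = None  # publisher's claiming policy, if known
    # issue label -> codes of the libraries that have checked that issue in
    receipts: Dict[str, Set[str]] = field(default_factory=dict)

    def record_receipt(self, issue: str, library: str) -> None:
        """Note that `library` has received `issue`."""
        self.receipts.setdefault(issue, set()).add(library)

    def should_claim(self, issue: str, library: str, threshold: int = 3) -> bool:
        """Suggest a claim when `library` lacks the issue but at least
        `threshold` other libraries already have it."""
        holders = self.receipts.get(issue, set())
        return library not in holders and len(holders) >= threshold
```

For example, once GPLS, BCPL and LARL have each recorded `"v.50 no.7"` of Communications of the ACM (ISSN 0001-0782), `should_claim("v.50 no.7", "LU")` returns `True`. The threshold is the policy knob: set it too low and a single early shipment somewhere triggers premature claims everywhere.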

Standards for expressing serials patterns

- MARC 21 Captions and Patterns Fields (853-855)

- ONIX for Serials Coverage (draft released September 2007)

  - In theory, publishers will actually start publishing information using this standard
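To show how the MARC 21 pattern fields can feed a schedule, here is a sketch that turns the frequency subfield ($w) of an 853-855 field into an expected number of issues per year. The code table is a subset written from memory of the MARC 21 Format for Holdings Data and should be verified against the current specification; `issues_per_year` is a hypothetical helper name.

```python
from typing import Optional

# Subset of MARC 21 Holdings 853-855 $w frequency codes -> issues per year.
# Verify against the current MARC 21 Format for Holdings Data before relying on it.
FREQUENCY_ISSUES_PER_YEAR = {
    "a": 1,    # annual
    "b": 6,    # bimonthly
    "c": 104,  # semiweekly
    "e": 26,   # biweekly
    "f": 2,    # semiannual
    "j": 36,   # three times a month
    "m": 12,   # monthly
    "q": 4,    # quarterly
    "s": 24,   # semimonthly
    "t": 3,    # three times a year
    "w": 52,   # weekly
}


def issues_per_year(subfield_w: str) -> Optional[int]:
    """Expected issues per year for a $w value.

    $w may hold the count itself (e.g. '14'); 'x' (completely irregular)
    and unknown codes return None.
    """
    value = subfield_w.strip().lower()
    if value.isdigit():
        return int(value)
    return FREQUENCY_ISSUES_PER_YEAR.get(value)
```

A numeric $w covers titles such as a 14-issue magazine that no single code describes.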

ISSN

The ISSN is the standardized international code which allows the identification of any serial publication, including electronic serials, independently of its country of publication, of its language or alphabet, of its frequency, medium, etc. The ISSN number, therefore, preceded by these letters, and appears as two groups of four digits, separated by a hyphen, has no signification in itself and does not contain in itself any information referring to the origin or contents of the publication.

(quote from http://www.issn.org/en/node/60)

ISSN numbers are assigned by the ISSN national Centres coordinated in a network. All ISSN are accessible via the ISSN Register. The ISSN is not "just another administrative number". The ISSN should be as basic a part of a serial as the title.

- As a standard numeric identification code, the ISSN is eminently suitable for computer use in fulfilling the need for file update and linkage, retrieval and transmittal of data.

- As a human readable code, the ISSN also results in accurate citing of serials by scholars, researchers, information scientists and librarians.

- In libraries, the ISSN is used for identifying titles, ordering and checking in, claiming serials, interlibrary-loan, union catalog reporting etc.

- ISSN is a fundamental tool for efficient document delivery. ISSN provides a useful and economical method of communication between publishers and suppliers, making trade distribution systems faster and more efficient, in particular through the use of bar-coding and EDI (electronic data interchange).

LC is the US national center under the National Serials Data Program (NSDP): http://www.lcweb.loc.gov/issn/
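Because the ISSN is meant for computer use, it is worth validating at entry time (order, check-in, claiming). The eighth character is a mod-11 check digit: weight the first seven digits 8 down to 2, and the check character is (11 - sum mod 11) mod 11, with 10 written as X. A minimal sketch; the function names are hypothetical.

```python
import re


def issn_check_digit(first_seven: str) -> str:
    """Compute the ISSN check character from the first seven digits."""
    # Weights 8, 7, 6, 5, 4, 3, 2 for digits 1..7.
    total = sum(int(d) * w for d, w in zip(first_seven, range(8, 1, -1)))
    check = (11 - total % 11) % 11
    return "X" if check == 10 else str(check)


def is_valid_issn(issn: str) -> bool:
    """Validate the NNNN-NNNC form and its check character."""
    match = re.fullmatch(r"(\d{4})-(\d{3})([\dXx])", issn.strip())
    if match is None:
        return False
    return issn_check_digit(match.group(1) + match.group(2)) == match.group(3).upper()
```

For example, `is_valid_issn("0001-0782")` (Communications of the ACM) is `True`, while a one-digit typo such as `"0001-0783"` is rejected.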

Serial patterns and claiming period data

Serials pattern frequencies for Laurentian University (top 12)

| Count | Pattern description |
|---|---|
| 687 | Every 3 months, on the 1st day of the month |
| 579 | Every 3 months, on the 31st day of the month |
| 471 | Annually on the 31st day of December |
| 443 | Annually on the 2nd day of January |
| 400 | Monthly, on the 31st day of the month |
| 238 | Every 2 months, on the 1st day of the month |
| 215 | Every 6 months, on the 31st day of the month |
| 211 | Monthly, on the 1st day of the month |
| 200 | Every 2 months, on the 31st day of the month |
| 161 | Annually on the 31st day of January and the 31st day of July |
| 125 | Every 4 months, on the 1st day of the month |
| 124 | Weekly, on the 4th day of the month |

Claim periods at Laurentian University

| Count | Claim period (days) |
|---|---|
| 2207 | 60 |
| 794 | 30 |
| 564 | 21 |
| 304 | 15 |
| 202 | 17 |
| 192 | 45 |
| 181 | 90 |
| 94 | 10 |
| 92 | 7 |
| 17 | 4 |
| 10 | 20 |
| 5 | 40 |
| 5 | 180 |
| 4 | 9 |
| 4 | 14 |
| 2 | 5 |
| 2 | 29 |
| 1 | 8 |
| 1 | 16 |
| 1 | 120 |
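The traditional calendar-driven claim report reduces to simple date arithmetic: an issue is claimable once its expected arrival date plus its claim period has passed without receipt. A sketch under that assumption; `ExpectedIssue` and `issues_to_claim` are hypothetical names, and the expected dates are assumed to come from the publishing pattern.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass
class ExpectedIssue:
    title: str
    label: str
    expected: date        # predicted arrival date from the publishing pattern
    claim_days: int       # claim period, e.g. 60 (the most common value above)
    received: bool = False

    @property
    def claim_due(self) -> date:
        """First day on which the issue may be claimed."""
        return self.expected + timedelta(days=self.claim_days)


def issues_to_claim(issues: List[ExpectedIssue], today: date) -> List[ExpectedIssue]:
    """Unreceived issues whose claim period has elapsed as of `today`."""
    return [i for i in issues if not i.received and today >= i.claim_due]
```

With the 60-day period, an issue expected on 2007-07-01 becomes claimable on 2007-08-30.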

Three classes of serial patterns

- Regular: easily predicted (e.g. weekly, monthly, quarterly, annually)

- Slightly irregular: requires overlaying two or more patterns / anti-patterns, combined with set operators (union, intersection, difference, etc.), to derive the publication pattern. For example:

  - Suppose PC Magazine is published 14 times a year; this would be represented as:

    - monthly pattern (first week of every month)

    - plus an annual pattern (third week of August)

    - plus an annual pattern (third week of December)

  - Suppose Communications of the ACM is published 11 times a year:

    - monthly pattern (second week of every month)

    - minus an annual pattern (December)

- Completely irregular: if the pattern is truly unpredictable, simply treat it as a monograph with multiple volumes. If the title of the serial changes, use MARC linking entry fields (e.g. 780 "preceding entry", 785 "succeeding entry"); Evergreen can then walk the chain to present a complete set of these serials.
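The two examples above map directly onto set operators. This sketch assumes a deliberately coarse representation, where each issue is a (month, week-of-month) slot within one year; real scheduling would need actual dates. It also sketches walking a title chain through 785 (succeeding entry) links, with hypothetical record IDs standing in for bibliographic records.

```python
from typing import Dict, List, Optional, Set, Tuple

Slot = Tuple[int, int]  # (month 1-12, week of month 1-5)


def monthly(week: int) -> Set[Slot]:
    """One issue in the given week of every month."""
    return {(month, week) for month in range(1, 13)}


def annual(month: int, week: Optional[int] = None) -> Set[Slot]:
    """One slot in a given month and week; with week=None, the whole month."""
    weeks = range(week, week + 1) if week is not None else range(1, 6)
    return {(month, w) for w in weeks}


# PC Magazine: monthly, plus extra issues in August and December (14 a year).
pc_magazine = monthly(1) | annual(8, 3) | annual(12, 3)
# Communications of the ACM: monthly, minus December (11 a year).
cacm = monthly(2) - annual(12)


def walk_title_chain(first: str, succeeding: Dict[str, str]) -> List[str]:
    """Follow 785 (succeeding entry) links from the earliest title,
    stopping at the end of the chain or if a link loops back."""
    chain: List[str] = []
    seen: Set[str] = set()
    current: Optional[str] = first
    while current is not None and current not in seen:
        chain.append(current)
        seen.add(current)
        current = succeeding.get(current)
    return chain
```

Intersection (`&`) fits the same model, for example to restrict a weekly pattern to the academic term.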

Action: to determine the most common serials patterns to present in an interface, Dan, Art, GPLS(?), BCPL(?) will extract the patterns for all of their serials.

Calendar & Scheduling Logic

Rather than step into the extremely messy world of writing code for scheduling recurring events, let's consider reusing the existing solutions to that problem (CalDAV calendar servers) that have happily sprung up in the last five years. We therefore started looking at CalDAV clients and servers for scheduling user interfaces and date/time logic. Using an existing standard opens up possibilities for:

- iCal export/import (e.g. publish all serials schedules for your library on Google Calendar, enable patrons to subscribe to a schedule for the serial / library combination in which they are interested)

- UI flexibility
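To make the iCal export idea concrete, here is a sketch that emits a minimal RFC 5545 calendar with one recurring all-day event per serial. The function name, UID and PRODID values are hypothetical. Mapping the Laurentian pattern "Monthly, on the 31st day of the month" to `BYMONTHDAY=-1` (last day of the month) is an assumption about what that pattern means; "Every 3 months, on the 1st day of the month" would be `FREQ=MONTHLY;INTERVAL=3;BYMONTHDAY=1`.

```python
from datetime import date, datetime
from typing import List


def serial_schedule_ics(uid: str, title: str, first_issue: date,
                        rrule: str, stamp: datetime) -> str:
    """Build a minimal iCalendar object with one recurring all-day event.

    `stamp` is assumed to already be in UTC. Text values are not escaped
    (commas, semicolons and backslashes would need it) and long lines are
    not folded.
    """
    lines: List[str] = [
        "BEGIN:VCALENDAR",
        "VERSION:2.0",
        "PRODID:-//Example Library//Serials Schedule//EN",  # hypothetical PRODID
        "BEGIN:VEVENT",
        f"UID:{uid}",
        f"DTSTAMP:{stamp.strftime('%Y%m%dT%H%M%SZ')}",
        f"DTSTART;VALUE=DATE:{first_issue.strftime('%Y%m%d')}",
        f"SUMMARY:Expected issue: {title}",
        f"RRULE:{rrule}",
        "END:VEVENT",
        "END:VCALENDAR",
    ]
    return "\r\n".join(lines) + "\r\n"  # RFC 5545 uses CRLF line endings
```

Publishing one such file per serial / library combination is what would let patrons subscribe from Google Calendar or any other iCalendar client.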

Having a calendar server would also help solve other Evergreen needs, such as room & equipment booking, displaying library days + hours of operation, importing institutional / state / provincial / federal holiday schedules.

So we could pick a CalDAV server and plug into the staff client either the Lightning extension for Thunderbird (probably not, as it sounds like it truly requires Thunderbird) or some pure Web UI client for scheduling purposes. Some calendar servers:

- Darwin Calendar Server (home, article)

  - Uses SQLite + Zope (portability). SQLite is already used by the Django admin interface, so miker (shockingly) had no objections to requiring it elsewhere in the infrastructure.

- Bedework (formerly UW Calendar) (http://www.bedework.org/)

  - Uses Hibernate; supports HSQLDB, MySQL 5, Oracle 10

  - PostgreSQL is unsupported (probably "because of a lack of familiarity")

  - On the AcqFest machine: http://acq.open-ils.org:8080/bedework/

- Really Simple CalDAV Store (home, SourceForge home)

  - Implemented in PHP + PostgreSQL

  - Might be able to simply reuse the PostgreSQL functions they defined

- Cosmo (home)

  - iCal

  - WebDAV

  - CalDAV

  - JSR 170

  - Apache Software Foundation
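Whichever server is chosen, the staff client would talk to it over standard CalDAV rather than a server-specific API. As a sketch, this builds (but does not send) an RFC 4791 `calendar-query` REPORT asking for all events in a time range; the collection URL is hypothetical, and individual servers may differ in which properties they return.

```python
import urllib.request

CALENDAR_QUERY = """<?xml version="1.0" encoding="utf-8"?>
<C:calendar-query xmlns:D="DAV:" xmlns:C="urn:ietf:params:xml:ns:caldav">
  <D:prop>
    <D:getetag/>
    <C:calendar-data/>
  </D:prop>
  <C:filter>
    <C:comp-filter name="VCALENDAR">
      <C:comp-filter name="VEVENT">
        <C:time-range start="{start}" end="{end}"/>
      </C:comp-filter>
    </C:comp-filter>
  </C:filter>
</C:calendar-query>"""


def build_calendar_query(collection_url: str, start: str, end: str) -> urllib.request.Request:
    """Build a CalDAV REPORT for VEVENTs between two UTC timestamps
    (format YYYYMMDDTHHMMSSZ). Depth 1 searches the collection's members."""
    body = CALENDAR_QUERY.format(start=start, end=end).encode("utf-8")
    return urllib.request.Request(
        collection_url,
        data=body,
        method="REPORT",
        headers={"Depth": "1", "Content-Type": 'application/xml; charset="utf-8"'},
    )
```

Passing the request to `urllib.request.urlopen` would send it; the response is a WebDAV multistatus document carrying the matching iCalendar data.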

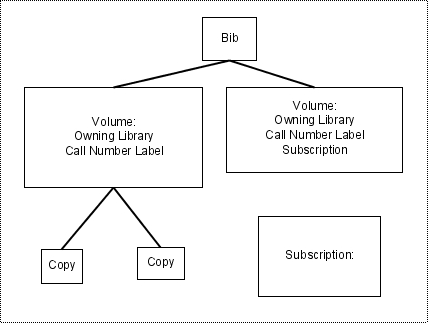

Fitting subscription objects into the Evergreen object model

A Subscription object:

- belongs to a Volume/CallNumber

- is the entry point into the calendaring application

- optionally contains a PO for linking into the acquisitions system for budgeting / accounts payable
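A sketch of the subscription object as described above. The field names are hypothetical and do not reflect Evergreen's actual schema; the calendar reference is assumed to be something like a CalDAV collection URL.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Subscription:
    """Hypothetical shape of a serials subscription."""

    call_number_id: int                      # the Volume/CallNumber it belongs to
    calendar_url: str                        # entry point into the calendaring application
    purchase_order_id: Optional[int] = None  # optional link into acquisitions

    @property
    def is_budgeted(self) -> bool:
        """True when the subscription is tied to a PO for budgeting / payables."""
        return self.purchase_order_id is not None
```

Keeping the PO optional lets gifts and exchanges be scheduled and checked in without touching acquisitions.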

Acquisitions

Requests

We assume that end-to-end request tracking will be part of acquisitions. Possible workflow:

- Patron searches for known item; can't find it; clicks on "Can't find what you're looking for? Ask us to get it for you!"

- Patron signs into My OPAC

- Patron enters as much citation information as possible (ISBN, ISSN, title, author…), or perhaps fires off a search against other sources (Amazon? Z39.50 targets) and selects the matching record? This would mesh well with the vendor discovery API functionality we've envisioned, and could simplify placing orders. It would also give the patron more feedback about the cost of the item.

- Request gets routed to the collection developer responsible for that subject area (if we can get a Dewey or LC class number, routing could be automated by subject area; otherwise some manual mediation may be required)

- If more information about the request is required, subject collection developer contacts requester.

- Subject collection developer approves or rejects the request; a notification is sent to the requester.

- Order is sent through acquisitions process. Automatic hold is placed on the requested item on behalf of the requester. Maybe we have goodness like RSS feeds for "my requests", etc.
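The automated routing step could be as simple as a lookup on the Dewey hundreds class, falling back to manual mediation. A sketch under that assumption: the selector queue names are hypothetical, and LC class numbers are not handled here, so they fall through to the manual queue.

```python
import re
from typing import Dict, Optional

MANUAL_QUEUE = "acq-manual-review"  # hypothetical queue for manual mediation


def route_request(dewey: Optional[str], selectors: Dict[int, str]) -> str:
    """Route a patron request by Dewey hundreds class (e.g. '513.2' -> 500).

    Returns the responsible collection developer's queue, or MANUAL_QUEUE
    when there is no usable Dewey number or no selector for that class.
    """
    if dewey:
        match = re.match(r"\s*(\d{3})", dewey)
        if match:
            hundreds = int(match.group(1)) // 100 * 100
            if hundreds in selectors:
                return selectors[hundreds]
    return MANUAL_QUEUE
```

For example, with `{500: "science-selector", 800: "literature-selector"}`, a request carrying `513.2` goes to the science selector, while one with no class number goes to manual review.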

From OPEN-ILS-DEV:

Please consider being able to set various types of limits on the requests, like number of outstanding requests, number of requests in a 30 day period, etc. This might be useful for organizations that want to rein in users that abuse the request feature, forcing the user to choose their items carefully.

Also, it might be useful to link certain users' requests to a certain funding source. For example, if Professor X, Professor Y, and Professor Z used this to acquire new research materials, and they are all in the same department, they would all be linked to that department's book budget, and wouldn't be able to request materials with a total cost 10% over their budget.... or something like that.

Josh

--

Lake Agassiz Regional Library - Moorhead MN larl.org

Josh Stompro | Office 218.233.3757 EXT-139

LARL Network Administrator | Cell 218.790.2110
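The limits suggested above could be checked when a request is submitted. A sketch assuming one reading of the budget rule (committed department spending plus the new request may not exceed the budget by more than 10%); the default limit values and all names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class PatronRequest:
    placed: datetime
    cost: float
    outstanding: bool


def limit_violations(history: List[PatronRequest], new_cost: float, now: datetime,
                     dept_budget: float, dept_committed: float,
                     max_outstanding: int = 5, max_per_30_days: int = 10,
                     overage: float = 0.10) -> List[str]:
    """Return the reasons a new request should be refused (empty list = allowed)."""
    problems: List[str] = []
    if sum(r.outstanding for r in history) >= max_outstanding:
        problems.append("too many outstanding requests")
    window_start = now - timedelta(days=30)
    if sum(r.placed >= window_start for r in history) >= max_per_30_days:
        problems.append("too many requests in the last 30 days")
    # Department-level cap: allow spending up to budget * (1 + overage).
    if dept_committed + new_cost > dept_budget * (1 + overage):
        problems.append("department budget exceeded")
    return problems
```

Returning every violation, rather than stopping at the first, lets the OPAC explain all the reasons at once.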

Discovery

Ideally Evergreen will implement vendor discovery APIs that enable a person placing an order for an item to submit a search from within the staff client that will query one or more vendors and enable the person placing the order to choose the preferred vendor (based on item cost, or ship time, or format).

No effort will be spent on screen-scraping vendor Web sites for this kind of information; only vendors that expose a discovery API will be supported. The reference implementation would probably be Amazon. Hopefully vendors will realize that the benefits of letting customers search for items from within the Evergreen staff client, minimizing the current copy-and-paste drudgery between Web browser and ILS, outweigh the increased competition they will face when cross-vendor search results can easily be compared.

A simple discovery API could be built on OpenSearch with result enrichment for price / estimated ship date by shipping method / format. Some authentication may be required to enable the vendor to calculate discounts for customers.
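On the client side, an OpenSearch-based discovery API mostly means filling each vendor's URL template and then ranking the enriched results. A sketch of both steps: the template substitution follows the OpenSearch 1.1 `{name}` / `{name?}` convention, while the vendor URL and the `price` / `ship_days` enrichment fields are hypothetical.

```python
import re
from typing import Any, Dict, List
from urllib.parse import quote


def fill_template(template: str, params: Dict[str, str]) -> str:
    """Fill an OpenSearch URL template such as
    'http://vendor.example.com/search?q={searchTerms}&p={startPage?}'.
    Optional parameters (trailing '?') with no value become empty strings;
    a missing required parameter raises KeyError."""

    def substitute(match):
        name, optional = match.group(1), match.group(2) == "?"
        if name in params:
            return quote(params[name], safe="")
        if optional:
            return ""
        raise KeyError(name)

    return re.sub(r"\{([^}?]+)(\??)\}", substitute, template)


def rank_offers(results: List[Dict[str, Any]], key: str = "price") -> List[Dict[str, Any]]:
    """Sort enriched results by one field, e.g. 'price' or 'ship_days'."""
    return sorted(results, key=lambda r: r[key])
```

Issuing one filled URL per vendor and merging the results is what replaces the vendor-by-vendor loop described below.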

Current workflow at Laurentian for finding an item to order:

- Technician logs into vendor #1 Web site, searches for item. If item found, technician orders the item.

- If item is not found, technician logs into vendor #2 Web site, searches for item. If item found, technician orders the item.

- If item is still not found, technician logs into vendor #3 Web site, searches for item. If item found, technician orders the item.

- If item is still not found, technician searches Advanced Book Exchange and places the order.

No attempt is made at Laurentian to find the best price or best ship time estimate.

Potential workflow with integrated vendor discovery:

- Log into staff client and select Acquisitions->Order an item.

- Enter desired search criteria (ISBN, title, author, format, etc). Results populate standard table widget, making it sortable by field (title, author, format, price, ship time).

- Select the desired result and click Place order. In the backend, Woodchip will record the details of the order (price / title / etc), generate a PO#, and submit that order to the vendor via EDI.

- Question: Does Woodchip check unencumbered balance for the given fund at this point and block or warn before allowing the order to proceed?
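One possible answer to the question above, sketched under assumptions: the unencumbered balance is the allocation minus encumbrances (open orders) minus expenditures, the order is blocked if it exceeds that balance, and a warning is raised if it would leave less than a hypothetical 10% of the allocation. Nothing here reflects Woodchip's actual design.

```python
from dataclasses import dataclass


@dataclass
class Fund:
    allocated: float
    encumbered: float  # committed to open purchase orders
    spent: float       # invoiced / paid

    @property
    def unencumbered(self) -> float:
        return self.allocated - self.encumbered - self.spent


def check_order(fund: Fund, order_total: float, warn_fraction: float = 0.10) -> str:
    """Return 'block', 'warn' or 'ok' for a proposed order against `fund`."""
    remaining = fund.unencumbered - order_total
    if remaining < 0:
        return "block"
    if remaining < fund.allocated * warn_fraction:
        return "warn"
    return "ok"
```

Whether "block" should be a hard stop or an override requiring a permission is a local policy decision.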

Of course, not all orders can be submitted via integrated vendor discovery, so Evergreen will still have to support manual order placement.

Order requests could be fed in directly from Evergreen's (entirely theoretical) requests functionality; depending on the amount of bibliographic information in the request, a search could be fired off automatically or refined by a staff member.

For LU, collection librarian requests might simply be placed directly as an order by the librarian themselves, rather than the current paper loop of librarian -> acq office -> technician.

Reporting

Mike gave a nice walkthrough of the current reporting interface in the staff client. To generate a report, you start by creating a report template and then create a report based on that template. The reporting interface enables you to browse through the database schema, defaulting to the most commonly required views but giving you access to any field from any table. You can choose fields from various views, apply transforms to those fields, and filter output based on those fields or other fields. Output formats are CSV, Excel, and HTML, as well as basic line and bar charts.
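The template / report split can be illustrated with a toy model: the template fixes the output fields, per-field transforms and any mandatory filters, and each report adds its own run-time filters before producing CSV. This is a hypothetical sketch over in-memory rows, not Evergreen's reporter code or schema.

```python
import csv
import io
from dataclasses import dataclass, field
from typing import Callable, Dict, Iterable, List

Row = Dict[str, object]


@dataclass
class ReportTemplate:
    """Hypothetical template: output fields, per-field transforms, fixed filters."""
    fields: List[str]
    transforms: Dict[str, Callable[[object], object]] = field(default_factory=dict)
    filters: List[Callable[[Row], bool]] = field(default_factory=list)


def run_report(template: ReportTemplate, rows: Iterable[Row],
               extra_filters: Iterable[Callable[[Row], bool]] = ()) -> str:
    """Apply template filters plus run-time filters, then emit CSV."""
    active = [*template.filters, *extra_filters]
    out = io.StringIO()
    writer = csv.writer(out)
    writer.writerow(template.fields)
    for row in rows:
        if all(f(row) for f in active):
            writer.writerow([template.transforms.get(name, lambda v: v)(row[name])
                             for name in template.fields])
    return out.getvalue()
```

In this model, putting an org_unit filter in `template.filters` rather than in a report's `extra_filters` is one way to make it non-optional.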

More complex reporting could be performed by exporting data in CSV format and pulling it into Eclipse BIRT.

Enhancements

- Currently the reporting interface provides access to the entire database, with the option of filtering by org_unit. It should be possible to lock down the templates so that the org_unit filter is no longer optional for those sites that require it.

- Move more of the interface into XUL, rather than the current ad-hoc interface that is still dynamic HTML.

- Give the Z39.50 search service asynchronous, multi-server support

- OpenSRF Date service?
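The asynchronous, multi-server idea amounts to fanning one query out to every target concurrently and collecting per-server results, so that one slow or dead server cannot stall the whole search. A sketch with `asyncio`; no Z39.50 library is used, and `fast_target` / `slow_target` are hypothetical stand-ins for real client calls.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List, Union

SearchFn = Callable[[str], Awaitable[List[str]]]


async def search_all(servers: Dict[str, SearchFn], query: str,
                     timeout: float = 10.0) -> Dict[str, Union[List[str], BaseException]]:
    """Query every server concurrently; a timeout or error is returned in
    that server's slot instead of failing the whole search."""

    async def one(fn: SearchFn) -> List[str]:
        return await asyncio.wait_for(fn(query), timeout)

    names = list(servers)
    results = await asyncio.gather(*(one(servers[n]) for n in names),
                                   return_exceptions=True)
    return dict(zip(names, results))


# Hypothetical stand-ins for real Z39.50 targets.
async def fast_target(query: str) -> List[str]:
    await asyncio.sleep(0.01)
    return [f"record for {query}"]


async def slow_target(query: str) -> List[str]:
    await asyncio.sleep(1)
    return []
```

Running `asyncio.run(search_all({"fast": fast_target, "slow": slow_target}, "serials", timeout=0.1))` returns the fast server's records alongside a timeout for the slow one.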